🪞Money Mirror

Plus AI Power Play and Gartner’s AI Cost Alarm

Welcome back!

At the rate video and image generation are improving, hyper-realistic fakes will soon be nearly indistinguishable from reality.

By 2028 or 2029, the line between “real” and “AI” will likely be functionally invisible.

That means we are going to need a new authentication layer for the internet. And we need to start building it now.

One possible solution is a blockchain-based verification system built around unique, one-time passphrases. Every authentic image or video featuring a real person could be cryptographically signed and recorded on an immutable blockchain ledger.

When someone views that content, a real-time verification check would confirm whether the embedded passphrase matches the one registered by the person in the image or video.

If it matches, the viewer sees a green verification badge. If it does not, the content is flagged as “unverified” or “AI-generated.”

For this to work, blockchain technology would need to become far more accessible, with simple interfaces and seamless integration into major content platforms. Each passphrase would need to be randomly generated, non-reusable, and permanently recorded.
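To make the flow concrete, here is a minimal sketch of the register-then-verify idea. Everything in it is an assumption for illustration: the `ledger` dictionary is a hypothetical in-memory stand-in for a blockchain, `register` and `verify` are made-up names, and a plain hash lookup takes the place of real public-key signatures.

```python
import hashlib
import secrets

# Hypothetical in-memory stand-in for an append-only blockchain ledger.
# Maps a content hash to the hash of the one-time passphrase registered for it.
ledger: dict[str, str] = {}


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of the given bytes."""
    return hashlib.sha256(data).hexdigest()


def register(content: bytes) -> str:
    """Stamp content at creation with a fresh, randomly generated passphrase."""
    content_id = sha256_hex(content)
    if content_id in ledger:
        # Entries are permanent: an existing record is never overwritten.
        raise ValueError("content already registered")
    passphrase = secrets.token_urlsafe(32)
    ledger[content_id] = sha256_hex(passphrase.encode())
    return passphrase  # assumed to be embedded in the content's metadata


def verify(content: bytes, embedded_passphrase: str) -> str:
    """Return the badge a viewer would see for this content."""
    expected = ledger.get(sha256_hex(content))
    if expected is None:
        return "unverified"
    supplied = sha256_hex(embedded_passphrase.encode())
    # Constant-time comparison of the two hex digests.
    return "verified" if secrets.compare_digest(expected, supplied) else "unverified"


photo = b"raw image bytes"
phrase = register(photo)
print(verify(photo, phrase))              # verified
print(verify(b"tampered bytes", phrase))  # unverified
```

Because the passphrase is tied to the hash of one specific file, it cannot be lifted and reused on a different image. A production system would also need identity checks and real signatures, which this sketch leaves out.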

Ideally, the signing process would be automatic. Once a user verifies their identity and registers, any content they create with their likeness would be cryptographically stamped at the moment of creation.

In licensing scenarios, individuals could register and restrict the use of their likeness. If someone attempts to generate hyper-realistic content featuring that person without authorization, the system would require a valid passphrase. Without it, the content would not be generated with that likeness.

Such a system may not eliminate every fake. But it could establish a clear standard of authenticity. And in the age of synthetic media, shifting the question from “Is this fake?” to “Is this verified?” may be what ultimately protects trust.

Anyway, let’s dive in.

On deck:

◾ Money Mirror

◾ AI Power Play

◾ Gartner’s AI Cost Alarm

◾ Quick Hits of other AI News worth your attention

💡Concept Corner

Practical ideas to work faster and smarter.

Money Mirror

What it is:

Most people don’t have a spending problem. They have a visibility problem. You could use AI as a “Money Mirror” to reflect your financial behavior back to you clearly and unemotionally, so you can see patterns you’ve either been oblivious to or quietly rationalizing away.

Example:

Let’s say you export the last three months of your bank statements and paste them into an AI tool. Instead of just categorizing expenses, you ask it to analyze trends, flag lifestyle inflation, and compare fixed versus variable costs.

It tells you your “small” food delivery habit is quietly matching your monthly investment contributions. It surfaces subscriptions you forgot about.

Then it models what happens if you redirect just 15 percent of your discretionary spending into a low-cost index fund over five years.

This isn’t budgeting. It’s strategy. AI becomes less of a calculator and more of a financial analyst sitting beside you, helping you make decisions with clarity instead of emotion.

Try this prompt (change X, Y, and Z to the values you want):

I’m pasting [3, 6, etc.] months of transaction data below. Categorize my spending, identify recurring expenses, calculate my average monthly savings rate, and highlight the top three areas where I could cut X% to Y% with minimal lifestyle impact. Then model how redirecting those savings into an investment earning Z% annually would compound over 5 years. Explain the tradeoffs clearly.
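If you want to sanity-check the compounding numbers an AI tool gives you, the math is simple enough to run yourself. Below is a rough sketch using the standard future-value formula for equal monthly contributions. The spending figure and the 7% return are hypothetical placeholders, not forecasts, and `future_value` is just a name I picked.

```python
def future_value(monthly_contribution: float, annual_return: float, years: int) -> float:
    """Future value of equal end-of-month contributions, compounded monthly."""
    months = years * 12
    monthly_rate = annual_return / 12
    if monthly_rate == 0:
        return monthly_contribution * months
    return monthly_contribution * ((1 + monthly_rate) ** months - 1) / monthly_rate


# Hypothetical inputs; swap in your own numbers.
discretionary_spending = 1_000.00  # dollars per month
redirect_share = 0.15              # the 15 percent from the example above
assumed_annual_return = 0.07       # the "Z%" in the prompt; an assumption, not a forecast

monthly = discretionary_spending * redirect_share
total = future_value(monthly, assumed_annual_return, years=5)

print(f"Monthly contribution: ${monthly:,.2f}")
print(f"Total contributed over 5 years: ${monthly * 60:,.2f}")
print(f"Projected value after 5 years: ${total:,.2f}")
```

The gap between the last two lines is the growth you would be giving up by leaving that money in discretionary spending. Real returns vary year to year, so treat the result as a ballpark.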

📡Signal behind the buzz🔊

Decoding trending AI stories.

📍AI Power Play

🔊Buzz:

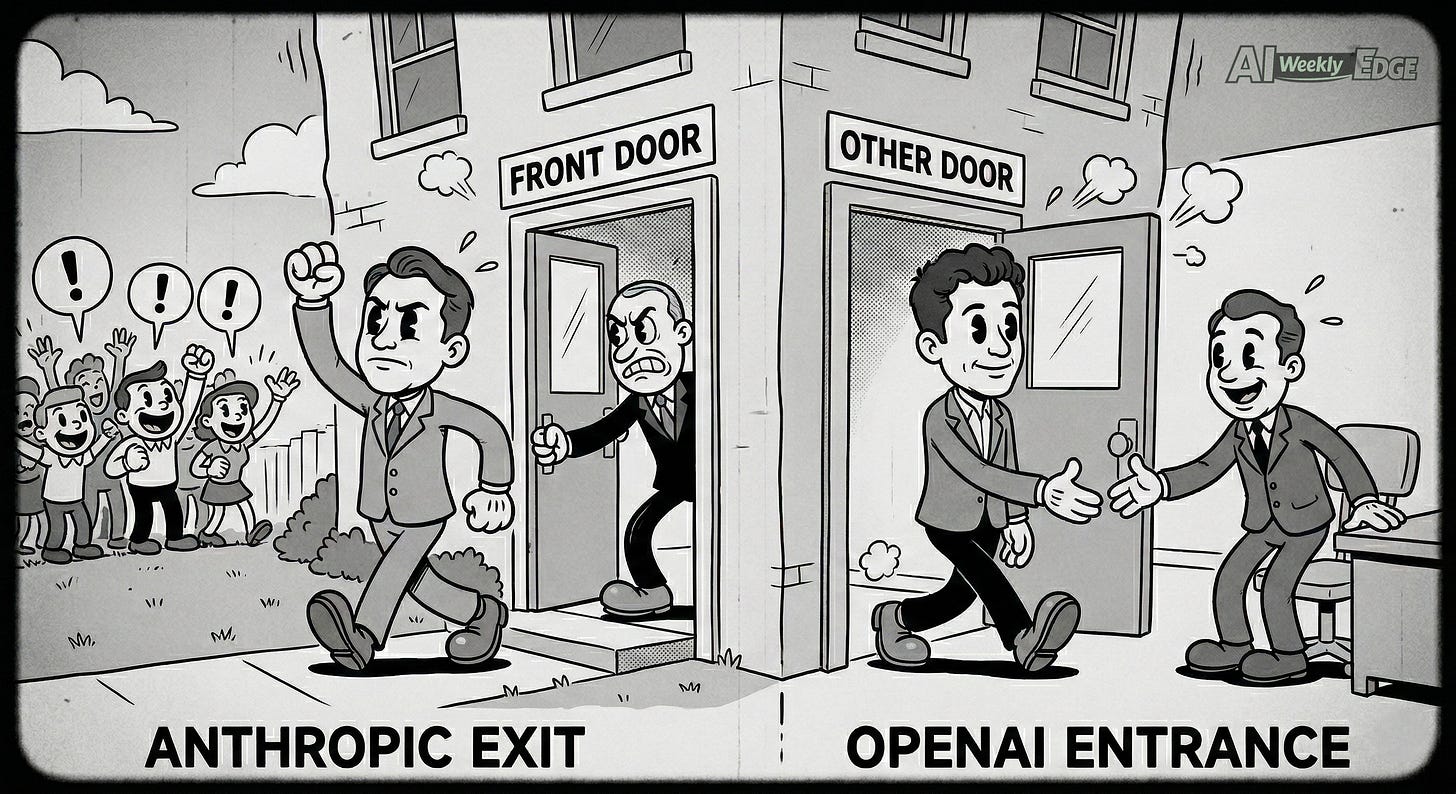

This week, the AI world was focused on a standoff between Anthropic and the US government.

The US government dropped Anthropic from federal contracts, labeling it a “supply chain risk” after disagreements over how Claude could be used, particularly for mass surveillance and autonomous weapons systems.

Almost immediately, OpenAI announced it had reached a separate agreement with the Department of War (DoW) to supply its AI models under similar guardrails.

📡Signal:

This is about control and risk tolerance. Anthropic’s leadership resisted contractual language that would allow military use of their AI for certain high-risk operations, prioritizing ethical boundaries.

The government, under pressure to maintain competitive edge and operational flexibility, walked away and red-tagged the company.

OpenAI quickly stepped in, ostensibly with similar safety principles, but experts worry that the language may be superficial and unenforceable without robust oversight.

🎙️My Take:

This clash underscores a broader tension in AI: who decides acceptable use? The answer affects national security, ethics, commercial growth, and public trust.

Companies willing to bend to government terms may win contracts, but that could erode public confidence.

Hard-line stances like Anthropic’s can cost strategic partnerships but may strengthen ethical credibility.

Real change is likely to come from legislation that sets consistent rules for all players, not ad-hoc deals.

📍Gartner’s AI Cost Alarm

🔊Buzz:

Gartner released an analysis predicting that by 2030, generative AI could cost more per customer service resolution than offshore human agents, with costs exceeding $3 per interaction due to rising infrastructure, compute, and data expenses.

📡Signal:

For years, the dominant pitch for AI in customer support has been “automation cuts labor costs.”

Gartner’s analysis challenges that assumption: deploying, governing, and monitoring generative AI, especially in enterprise environments, involves substantial hidden expenses that can outpace the savings from labor arbitrage.

Costs include GPUs, real-time APIs, safety checks, fallback human supervision, and ongoing model retraining.

🎙️My Take:

First, the cost curve is not static. The hardware that powers AI (GPUs and related infrastructure) will likely become more efficient and cheaper over time. Compute has historically followed a downward trajectory as scale increases and optimization improves.

But the bigger shift is this: companies need to move beyond the cost-cutting narrative and evaluate AI through a value-creation lens.

If AI improves upselling, customer satisfaction, retention, or response speed, a higher “per resolution” cost may still produce meaningful ROI.
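A quick back-of-the-envelope sketch shows how that can work. Only the $3 AI cost echoes the Gartner figure (which is a floor, not an exact number). The human cost and both revenue figures are hypothetical values chosen purely for illustration.

```python
def net_value_per_resolution(cost: float, revenue_effect: float) -> float:
    """Value one resolved interaction creates after paying for it."""
    return revenue_effect - cost


# Only ai_cost reflects the Gartner figure; the rest are hypothetical.
ai_cost = 3.00               # dollars per resolution
human_cost = 2.50            # hypothetical offshore agent cost
ai_revenue_effect = 4.00     # hypothetical upsell and retention value per AI resolution
human_revenue_effect = 3.00  # hypothetical value per human resolution

ai_net = net_value_per_resolution(ai_cost, ai_revenue_effect)
human_net = net_value_per_resolution(human_cost, human_revenue_effect)

print(f"AI net value per resolution: ${ai_net:.2f}")
print(f"Human net value per resolution: ${human_net:.2f}")
```

With these made-up inputs, the pricier AI resolution still nets more per interaction. Flip the revenue assumptions and the conclusion flips too, which is exactly why the value side needs measuring before the budget decision.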

The real risk is treating AI as an instrument to slash support budgets. Blind cost-cutting bets could backfire.

The smarter opportunity lies in augmentation. Let AI handle routine, repetitive tasks while human agents handle nuance, judgment, and complex edge cases. That combination delivers the best of both worlds.

🍵Quick Hits of Other AI News

🤝 OpenAI and Amazon signed a massive multi-year deal: OpenAI will run more AI workloads on AWS (including Amazon’s Trainium chips), and Amazon is committing up to $50B to power the expansion.

🧠 Google launched Gemini 3.1 Pro, its upgraded “heavy thinking” model for complex tasks like coding and advanced reasoning. It’s now live across the Gemini app, API, and Vertex AI.

🛑 OpenAI laid out clear “red lines” in its U.S. defense work, saying it won’t build AI for mass surveillance, autonomous weapons targeting, or fully automated life-or-death decisions.

🤖 China is moving to regulate AI companion apps that act like virtual “friends,” citing risks of emotional dependence and addiction-like behavior.

💰 Meta is doubling down on AI hardware, striking a deal worth up to $100B to buy AMD AI chips, plus securing an option to take a stake in AMD.

🇫🇷 France’s Mistral teamed up with Accenture in a multi-year deal to build enterprise AI tools using Mistral’s models, pairing European AI tech with global consulting distribution.

🧑‍💼 Anthropic is turning Claude into more of a workplace teammate, adding an (early) Excel-to-PowerPoint automation for multi-step office tasks, plus connectors for Google Workspace, DocuSign, and WordPress.

Thanks for reading, see you next week!

-Michael.